
Hadoop Ecosystem

In this article, I am going to discuss the Hadoop Ecosystem. Please read our previous article, where we discussed the MapReduce framework. At the end of this article, you will understand the main components of the Hadoop Ecosystem and how they work together.

Hadoop Ecosystem

The Hadoop Ecosystem is a platform, or suite, that offers a variety of services for solving big data problems. It consists of Apache projects as well as a variety of commercial tools and solutions. HDFS, MapReduce, YARN, and Hadoop Common are the four core components of Hadoop. Most of the other tools and solutions complement or support these core components. Together, these tools provide data ingestion, analysis, storage, and maintenance, among other functions.

The Hadoop ecosystem is continuously growing to meet the needs of Big Data. It comprises the following twelve components:

- HDFS (Hadoop Distributed File System)

- HBase

- Sqoop

- Flume

- Spark

- Hadoop MapReduce

- Pig

- Impala

- Hive

- Cloudera Search

- Oozie

- Hue

Let us understand the role of each component of the Hadoop ecosystem, starting with the first one, HDFS.

HDFS (HADOOP DISTRIBUTED FILE SYSTEM)

- HDFS is a storage layer for Hadoop.

- HDFS is suitable for distributed storage and processing: as data is stored, it is first distributed across the cluster and then processed.

- HDFS provides streaming access to file system data.

- HDFS provides file permissions and authentication.

- HDFS provides a command-line interface for interacting with the file system.
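
To make the "first distributed, then processed" idea concrete, here is a minimal Python sketch of how a file might be split into blocks and copied to several machines. It is only a model: the 128 MB block size and replication factor of 3 are common HDFS defaults assumed here rather than taken from this article, the DataNode names are hypothetical, and the round-robin placement is a simplification of what HDFS actually does.

```python
import math

BLOCK_SIZE = 128 * 1024 * 1024   # assumed: common HDFS default block size (128 MB)
REPLICATION = 3                  # assumed: common default replication factor


def place_blocks(file_size, datanodes, block_size=BLOCK_SIZE, replication=REPLICATION):
    """Return {block_index: [datanode, ...]} for a file of file_size bytes.

    Placement is plain round-robin; real HDFS placement is more sophisticated.
    """
    if replication > len(datanodes):
        raise ValueError("need at least as many DataNodes as replicas")
    n_blocks = math.ceil(file_size / block_size)
    placement = {}
    for b in range(n_blocks):
        # Each block is copied to `replication` distinct nodes.
        placement[b] = [datanodes[(b + r) % len(datanodes)] for r in range(replication)]
    return placement


# A 300 MB file on a hypothetical four-node cluster.
layout = place_blocks(300 * 1024 * 1024, ["dn1", "dn2", "dn3", "dn4"])
for block, nodes in layout.items():
    print(block, nodes)
```

Because every block lives on several nodes, a processing framework can work on different blocks in parallel and can still read a block if one node is unavailable.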

So, which components store their data in HDFS? One of them is HBase, a database that runs on top of HDFS.

HBase

- HBase is a NoSQL database or non-relational database.

- HBase is mainly used when you need random, real-time read or write access to your Big Data.

- It supports high data volumes and high throughput.

- In HBase, a table can have thousands of columns.
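
The points above can be pictured with a toy model of a wide, sparse table. This is a sketch only, not the HBase client API: the class `SparseTable`, the row keys, and the column names are all hypothetical, and the `family:qualifier` column naming follows the convention HBase uses.

```python
from collections import defaultdict


class SparseTable:
    """Toy stand-in for an HBase table: row key -> {"family:qualifier": value}."""

    def __init__(self):
        self._rows = defaultdict(dict)

    def put(self, row_key, column, value):
        # Random write: only the addressed cell is touched.
        self._rows[row_key][column] = value

    def get(self, row_key, column=None):
        # Random read by row key; returns one cell or a copy of the whole row.
        row = self._rows.get(row_key, {})
        return dict(row) if column is None else row.get(column)


users = SparseTable()
users.put("user#1001", "info:name", "Asha")
users.put("user#1001", "metrics:logins", 42)
users.put("user#1002", "info:name", "Ben")   # this row has no metrics columns

print(users.get("user#1001", "metrics:logins"))
print(users.get("user#1002"))
```

Each row stores only the columns it actually uses, which is why a table can define thousands of columns without every row paying for all of them.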

We discussed how data is distributed and stored. Now, let us understand how this data is ingested or transferred to HDFS. Sqoop does exactly this.

What is Sqoop?

- Sqoop is a tool designed to transfer data between Hadoop and relational database servers.

- It is used to import data from relational databases (such as Oracle and MySQL) to HDFS and export data from HDFS to relational databases.
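
As a rough illustration of both directions, the Python sketch below builds the argument lists for a `sqoop import` and a `sqoop export` command. The JDBC URL, user name, table names, and HDFS paths are hypothetical placeholders, authentication options are left out, and the helper functions are not part of Sqoop itself; they only assemble the command line.

```python
import shlex


def sqoop_import_args(jdbc_url, username, table, target_dir, num_mappers=1):
    """Arguments for copying one relational table into an HDFS directory."""
    return [
        "sqoop", "import",
        "--connect", jdbc_url,
        "--username", username,
        "--table", table,
        "--target-dir", target_dir,
        "--num-mappers", str(num_mappers),
    ]


def sqoop_export_args(jdbc_url, username, table, export_dir):
    """Arguments for copying an HDFS directory back into a relational table."""
    return [
        "sqoop", "export",
        "--connect", jdbc_url,
        "--username", username,
        "--table", table,
        "--export-dir", export_dir,
    ]


# Hypothetical database, table, and paths, for illustration only.
import_cmd = sqoop_import_args("jdbc:mysql://dbhost/sales", "etl_user", "orders", "/data/orders")
export_cmd = sqoop_export_args("jdbc:mysql://dbhost/sales", "etl_user", "order_totals", "/data/order_totals")
print(shlex.join(import_cmd))
print(shlex.join(export_cmd))
```

On a machine where Sqoop is installed, lists like these could be passed to `subprocess.run`; here they are only printed.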

If you want to ingest event data such as streaming data, sensor data, or log files, then you can use Flume. We will look at Flume in the next section.

Flume

- Flume is a distributed service that collects event data and transfers it to HDFS.

- It is ideally suited for event data from multiple systems.

After the data is transferred into the HDFS, it is processed. One of the frameworks that process data is Spark.

What is Spark?

- Spark is an open-source cluster computing framework.

- For some applications, its in-memory primitives provide up to 100 times faster performance than Hadoop's two-stage, disk-based MapReduce paradigm.

- Spark can run in the Hadoop cluster and process data in HDFS.

- It also supports a wide variety of workloads, including machine learning, business intelligence, streaming, and batch processing.

Spark has the following major components:

- Spark Core and Resilient Distributed Datasets (RDDs)

- Spark SQL

- Spark Streaming

- Machine learning library (MLlib)

- GraphX

Spark is now widely used, and you will learn more about it in subsequent lessons.

Hadoop MapReduce

- Hadoop MapReduce is the other framework that processes data.

- It is the original Hadoop processing engine, which is primarily Java-based.

- It is based on the map and reduce programming model.

- Many tools, such as Hive and Pig, are built on the MapReduce model.

- It has extensive, mature fault tolerance built into the framework.

- It is still very commonly used but is losing ground to Spark.
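
The map and reduce programming model mentioned above can be sketched in a few lines of plain Python. This runs on a single machine and only mirrors the shape of the model (map, then group by key, then reduce); it is not Hadoop's Java API, and the function names are chosen for this example.

```python
from collections import defaultdict


def map_phase(line):
    """Map: turn one input line into (word, 1) pairs."""
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs):
    """Shuffle: group all values that share the same key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce: collapse the values for one key into a single result."""
    return key, sum(values)


def word_count(lines):
    pairs = (pair for line in lines for pair in map_phase(line))
    return dict(reduce_phase(key, values) for key, values in shuffle(pairs).items())


counts = word_count(["big data needs big tools", "hadoop stores big data"])
print(counts)
```

In a real cluster, many map tasks and reduce tasks run on different machines, and the framework handles the grouping step and reruns failed tasks.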

After the data is processed, it is analyzed. This can be done with Pig, an open-source, high-level data flow system used mainly for analytics. Let us now understand how Pig is used for analytics.

Pig

- Pig converts its scripts to Map and Reduce code, thereby saving the user from writing complex MapReduce programs.

- Ad-hoc queries like Filter and Join, which are difficult to perform in MapReduce, can be easily done using Pig.

You can also use Impala to analyze data. It is an open-source, high-performance SQL engine that runs on the Hadoop cluster. It is ideal for interactive analysis and has very low latency, which can be measured in milliseconds.

Impala

- Impala supports a dialect of SQL, so data in HDFS is modeled as a database table.

You can also perform data analysis using Hive. It is an abstraction layer on top of Hadoop and is very similar to Impala. However, Hive is preferred for data processing and Extract, Transform, Load (ETL) operations, whereas Impala is preferred for ad-hoc queries.

HIVE

- Hive executes queries using MapReduce; however, users do not need to write any low-level MapReduce code.

- Hive is suitable for structured data. After the data is analyzed, it is ready for users to access.
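
To see why a SQL-style query can be executed as map and reduce steps, the sketch below computes the same grouped count twice: once as a declarative query and once as an explicit map, group, and reduce. SQLite is used purely as a stand-in so the example runs anywhere; it is not Hive, and the table and data are made up for illustration.

```python
import sqlite3
from collections import defaultdict

rows = [("alice", "sales"), ("bob", "sales"), ("carol", "ops")]

# Declarative view: the kind of query a user would write.
conn = sqlite3.connect(":memory:")   # SQLite is only a stand-in for Hive here
conn.execute("CREATE TABLE employees (name TEXT, dept TEXT)")
conn.executemany("INSERT INTO employees VALUES (?, ?)", rows)
sql_result = dict(conn.execute("SELECT dept, COUNT(*) FROM employees GROUP BY dept"))
conn.close()

# MapReduce view: the same answer as map, group by key, then reduce.
grouped = defaultdict(list)
for _name, dept in rows:
    grouped[dept].append(1)          # map emits (dept, 1); grouping plays the shuffle role
mr_result = {dept: sum(ones) for dept, ones in grouped.items()}   # reduce sums per key

print(sql_result)
print(mr_result)
```

The user only writes the query; translating it into lower-level steps like the second half is the engine's job.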

Now that we know what Hive does, we will discuss how data can be searched. Data search is done using Cloudera Search.

Cloudera Search

- Search is one of Cloudera’s near-real-time access products. It enables non-technical users to search and explore data stored in or ingested into Hadoop and HBase.

- Users do not need SQL or programming skills to use Cloudera Search because it provides a simple, full-text interface for searching.

- Another benefit of Cloudera Search compared to stand-alone search solutions is the fully integrated data processing platform.

- Cloudera Search uses the flexible, scalable, and robust storage system included with CDH, Cloudera's distribution including Apache Hadoop. This eliminates the need to move large datasets across infrastructures to address business tasks.

Hadoop jobs such as MapReduce, Pig, Hive, and Sqoop have workflows. These workflows can be managed with Oozie.

Oozie

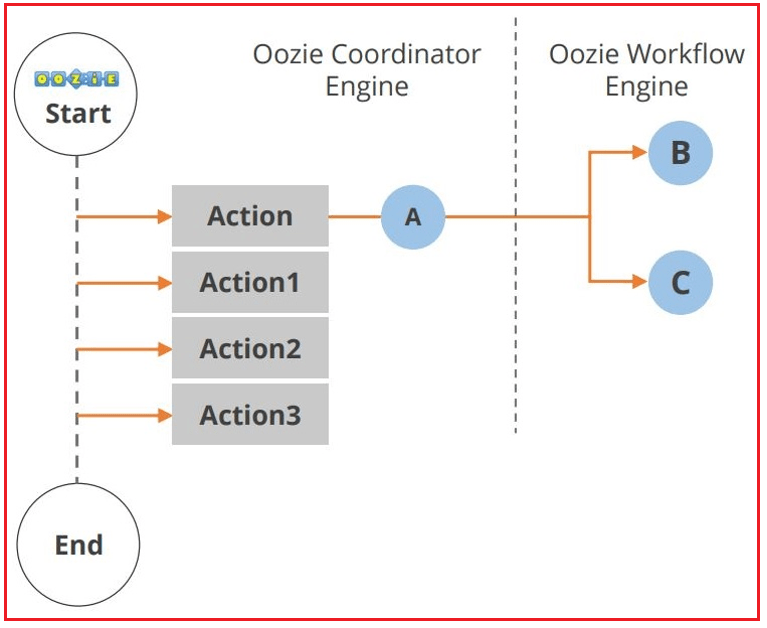

Oozie is a workflow or coordination system that you can use to manage Hadoop jobs. The Oozie application lifecycle is shown in the diagram above.
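
The idea of a workflow that moves from a start node through several actions to an end node can also be sketched in Python. This is a simplified model, not Oozie's own workflow definition format: the action names are hypothetical, and each action simply names the node to go to on success and on error.

```python
def run_workflow(nodes, start):
    """Walk a workflow from `start` until it reaches 'end' or 'kill'.

    `nodes` maps an action name to (callable, next_on_ok, next_on_error).
    Returns (final_node, list_of_actions_run).
    """
    current, trail = start, []
    while current not in ("end", "kill"):
        action, on_ok, on_error = nodes[current]
        trail.append(current)
        try:
            action()
            current = on_ok
        except Exception:
            # A failed action follows its error transition instead.
            current = on_error
    return current, trail


def failing_step():
    raise RuntimeError("simulated job failure")


# Hypothetical three-step workflow: ingest, clean, then report.
workflow = {
    "import-data": (lambda: None, "clean-data", "kill"),
    "clean-data": (lambda: None, "build-report", "kill"),
    "build-report": (lambda: None, "end", "kill"),
}
ok_run = run_workflow(workflow, "import-data")

# Same workflow, but the middle step fails.
broken = dict(workflow, **{"clean-data": (failing_step, "build-report", "kill")})
failed_run = run_workflow(broken, "import-data")

print(ok_run)
print(failed_run)
```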

As you can see, multiple actions occur between the start and end of the workflow. Another component in the Hadoop ecosystem is Hue. Let us look at Hue now.

Hue

Hue is an acronym for Hadoop User Experience. It is an open-source web interface for Hadoop. You can perform the following operations using Hue:

- Upload and browse data

- Query tables in Hive and Impala

- Run Spark and Pig jobs and workflows

- Search data

All in all, Hue makes Hadoop easier to use. It also provides a SQL editor for Hive, Impala, MySQL, Oracle, PostgreSQL, SparkSQL, and Solr SQL.

After this brief overview of the twelve components of the Hadoop ecosystem, we will now discuss how these components work together to process Big Data.

Stages of Big Data processing

There are four stages of Big Data processing: Ingest, Processing, Analyze, and Access. Let us look at them in detail.

Ingest

The first stage of Big Data processing is Ingest. The data is ingested or transferred to Hadoop from various sources such as relational databases, other systems, or local files. Sqoop transfers data from an RDBMS to HDFS, whereas Flume transfers event data.

Processing

The second stage is Processing. In this stage, the data is stored and processed. The data is stored in the distributed file system, HDFS, and in the distributed NoSQL database, HBase. Spark and MapReduce perform the data processing.

Analyze

The third stage is Analyze. Here, the data is analyzed by processing frameworks such as Pig, Hive, and Impala. Pig converts its scripts into map and reduce steps and then analyzes the data. Hive is also based on the map and reduce programming model and is most suitable for structured data.

Access

The fourth stage is Access, which is performed by tools such as Hue and Cloudera Search. In this stage, the analyzed data can be accessed by users. Hue is the web interface, whereas Cloudera Search provides a text interface for exploring data.
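
The four stages and the tools this article names for each can be summarized as a small lookup table. The dictionary below simply restates the text above; the helper `stage_of` is a hypothetical name for this example. Oozie is omitted because the stage descriptions do not assign it to a stage.

```python
STAGES = {
    "Ingest": ["Sqoop", "Flume"],
    "Processing": ["HDFS", "HBase", "Spark", "MapReduce"],
    "Analyze": ["Pig", "Hive", "Impala"],
    "Access": ["Hue", "Cloudera Search"],
}


def stage_of(tool):
    """Return the stage in which the article places the given tool."""
    for stage, tools in STAGES.items():
        if tool in tools:
            return stage
    raise KeyError(f"{tool} is not assigned to a stage")


for stage, tools in STAGES.items():
    print(f"{stage}: {', '.join(tools)}")
```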

In this article, I have tried to explain the Hadoop Ecosystem, and I hope you enjoyed it.