
Basic Kubernetes Architecture

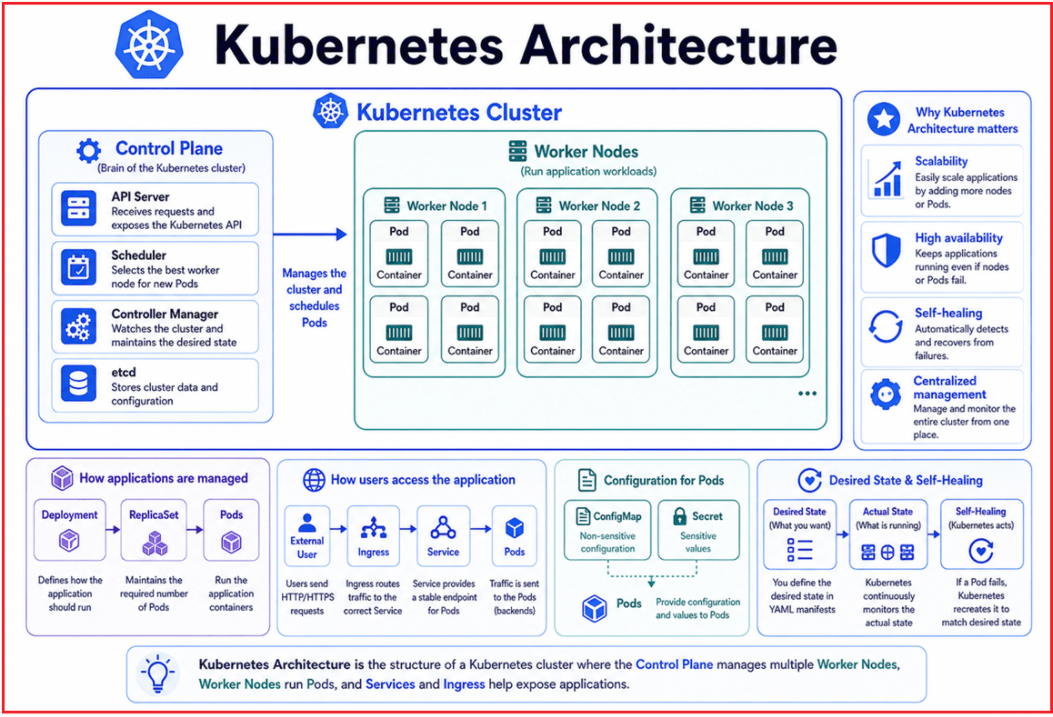

Kubernetes is a platform for running and managing containerized applications. Before learning about AKS deployment, we should understand the basic Kubernetes architecture, including the Cluster, Worker Nodes, Pods, Control Plane, Services, Ingress, ConfigMaps, and Secrets. These components work together to deploy, run, expose, configure, and manage applications reliably.

Basic Terms We Should Know First

Before understanding Kubernetes Architecture, we should first become familiar with a few basic terms. These terms will help us understand how Kubernetes runs and manages containerized applications.

- Docker Image: A Docker Image is a packaged version of an application. It contains the application code, runtime, libraries, configuration files, and other dependencies required to run the application.

- Container: A Container is a running instance of a Docker Image. It provides an isolated environment where the application runs consistently across different machines.

- Pod: A Pod is the smallest deployable unit in Kubernetes. It is the Kubernetes-managed object that contains one or more containers and provides networking, storage access, lifecycle, and scheduling context for those containers.

- Node: A Node is a machine inside the Kubernetes cluster where Pods are scheduled and executed. It can be a physical server, virtual machine, or cloud-based virtual machine.

- Control Plane: The Control Plane is the management part of Kubernetes. It makes cluster-level decisions, such as where Pods should run, monitors the cluster, and maintains the desired state.

- Cluster: A Cluster is the complete Kubernetes environment made up of the Control Plane and Worker Nodes. It manages and runs containerized applications as a single system.

Three Logical Layers of Kubernetes Architecture

Kubernetes follows a cluster-based architecture where multiple components work together to run containerized applications efficiently. For easier understanding, we can group Kubernetes components into three logical layers:

1. Infrastructure-Level Components

These components provide the foundation on which applications run and on which Kubernetes makes cluster-level decisions.

- Cluster

- Control Plane

- Worker Nodes

2. Application Running-Level Components

These components are responsible for running application containers, keeping the required number of application copies alive, and safely updating the application.

- Pods

- Deployments

- Replicas and ReplicaSets

3. Application Access and Configuration-Level Components

These components help users access the application and enable it to receive configuration values without hardcoding them in the Docker image.

- Services

- Ingress

- ConfigMaps

- Secrets

In simple words, first we need machines. Then we need application containers running on those machines. After that, we need a stable way to access those applications and provide configuration values. Kubernetes connects all these parts together and continuously tries to keep the application running in the desired state. For a better understanding, please have a look at the following diagram:

Real-Life Analogy

Think of Kubernetes architecture like a company. A company has management, departments, employees, rules, resources, and communication systems. Everyone has a role. The company works smoothly only when all these parts work together.

Similarly, Kubernetes has different components. Each component has a specific responsibility. The control plane makes decisions; worker nodes provide computing resources; Pods run containers; Services expose applications; and configuration objects provide settings. Together, they help run containerized applications properly.

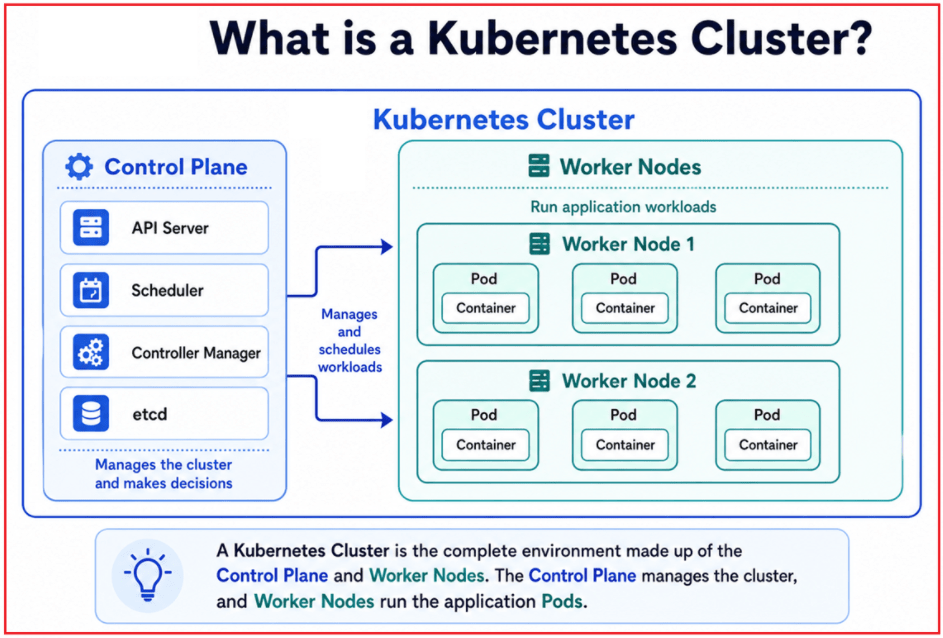

What is a Kubernetes Cluster?

A Kubernetes Cluster is the complete environment for running containerized applications. It is made up of machines and Kubernetes components that work together as one system. A cluster usually contains two major parts:

- Control Plane: The control plane manages the cluster.

- Worker Nodes: The worker nodes are responsible for running the actual application workloads.

For a better understanding, please have a look at the following image:

Why Do We Need a Cluster?

In small applications, one server may be enough. But real-world applications require better availability, scalability, and fault handling. A cluster allows us to use multiple machines together so that applications can be distributed across them.

For example, if our application has multiple services such as Product API, Order API, Payment API, and Notification API, Kubernetes can run them across different worker nodes. If one node becomes unhealthy, Kubernetes can try to run the affected workloads on another healthy node, depending on available capacity and configuration.

How a Cluster Works

When we submit a deployment request to Kubernetes, the Control Plane reads the requested state and decides where the Pods should run. The selected Worker Nodes then run those Pods. Kubernetes keeps checking the cluster continuously. If the actual running state does not match the desired state, Kubernetes tries to correct it.

In simple words:

- The control plane manages the cluster.

- Worker nodes run the actual application workloads.

- Kubernetes continuously checks whether the running system matches what we requested.

Real-Life Analogy

A Kubernetes Cluster is like an entire hospital. A hospital has many departments such as emergency, surgery, pharmacy, billing, laboratory, and patient rooms. All departments work together under one hospital system.

Similarly, a Kubernetes Cluster contains many machines and components that work together to run applications. The control plane is like the hospital administration, and worker nodes are like different departments where the actual work happens.

Key Points to Remember:

- A cluster is the complete Kubernetes environment.

- A cluster contains the control plane and worker nodes.

- The control plane manages decisions and maintains the desired state.

- Worker nodes execute application workloads.

- Applications run inside Pods on worker nodes.

What is a Worker Node in Kubernetes?

A Worker Node is a machine where application workloads run. A node can be a physical server, a virtual machine, or a cloud-based virtual machine. In Azure Kubernetes Service, worker nodes are usually Azure Virtual Machines.

Worker nodes provide the resources required by applications, such as CPU, Memory, Storage, and Networking. When Kubernetes schedules a Pod, that Pod is placed on one of the available worker nodes.
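To make the idea of placing a Pod on an available worker node more concrete, here is a minimal Python sketch. It is not the real Kubernetes scheduler, which applies many more filtering and scoring rules. The `Node` class, the `pick_node` function, and the CPU and memory numbers are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    """A simplified worker node with free capacity (hypothetical model)."""
    name: str
    free_cpu_millicores: int
    free_memory_mib: int


def pick_node(nodes: List[Node], cpu_request: int, memory_request: int) -> Optional[Node]:
    """Return the fitting node with the most free CPU, or None if nothing fits."""
    candidates = [
        n for n in nodes
        if n.free_cpu_millicores >= cpu_request and n.free_memory_mib >= memory_request
    ]
    if not candidates:
        return None  # No node has enough free capacity for this Pod.
    return max(candidates, key=lambda n: n.free_cpu_millicores)


# Three worker nodes with made-up free capacity.
nodes = [
    Node("node-1", free_cpu_millicores=500, free_memory_mib=1024),
    Node("node-2", free_cpu_millicores=1500, free_memory_mib=2048),
    Node("node-3", free_cpu_millicores=200, free_memory_mib=4096),
]

chosen = pick_node(nodes, cpu_request=250, memory_request=512)
print(chosen.name if chosen else "no node fits")
```

In this sketch, node-3 is skipped because it does not have enough free CPU, and the Pod lands on the node with the most free CPU among the remaining ones.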

A node can be:

- A Physical Server: A physical server is a real hardware machine in a data center where Kubernetes can run Pods.

- A Virtual Machine: A virtual machine is a software-based machine created on top of physical hardware, on which Kubernetes can run Pods.

- A Cloud-Based Virtual Machine: A cloud-based virtual machine is a VM provided by cloud platforms such as Azure, AWS, or Google Cloud. In AKS, Worker Nodes are usually Azure Virtual Machines.

For example, if our AKS cluster has three worker nodes, Kubernetes can distribute application workloads across those nodes. If one node becomes unhealthy, Kubernetes can recreate or reschedule the affected Pods on another healthy node, depending on capacity and configuration. For a better understanding, please have a look at the following image:

Why Do We Need Worker Nodes?

Applications need:

- CPU: CPU provides the processing power required to execute the application code.

- Memory: Memory stores the temporary data required while the application is running.

- Storage: Storage is used to persistently store application data, files, logs, and other required information.

- Networking: Networking allows the application to communicate with users, other applications, databases, and external services.

Worker nodes provide these resources. The control plane only manages the cluster. It does not usually run our business application containers. Worker nodes provide the actual computing power where our ASP.NET Core Web API, background services, and other containers run.

Real-Life Analogy

Think of a node as a worker in a factory. A factory may have many workers. Each worker has some capacity. One worker can handle only a limited amount of work. If work increases, the factory needs more workers.

Similarly, the factory is the cluster, and each worker is a node. A Kubernetes cluster may have many nodes. Each node has CPU and memory capacity to run application Pods.

Key Points to Remember:

- A node is a machine inside the Kubernetes cluster.

- Worker nodes run application Pods.

- In AKS, worker nodes are usually Azure Virtual Machines.

- Each node provides CPU, memory, storage, and networking resources.

- More nodes provide more capacity to run workloads.

What Is a Pod in Kubernetes?

A Pod is the smallest deployable unit in Kubernetes. It represents one or more containers that share the same network, storage, and lifecycle context. In Docker, we usually think in terms of containers. In Kubernetes, we usually think in terms of Pods.

The container provides the application runtime environment: the language runtime, libraries, dependencies, and application files. The Pod provides the Kubernetes context around the container on a Worker Node, including networking, storage, lifecycle management, configuration, secrets, health checks, and scheduling.

Most of the time, a Pod contains a single main application container. For example, our ASP.NET Core Web API container typically runs inside a Pod.

So, the relationship is:

- Application code is packaged as a Docker image.

- Kubernetes runs that image as a container.

- The container runs inside a Pod.

- The Pod runs on a worker node.
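The relationship above can be sketched as a Pod definition. The following Python snippet builds a minimal Pod manifest as a dictionary and prints it as JSON, a format that `kubectl` accepts alongside YAML. The image name, the Pod name, and port 8080 are assumptions made for this example, not values from a real project.

```python
import json

# Hypothetical image name; replace it with an image from your own registry.
IMAGE = "myregistry.azurecr.io/product-api:1.0"

pod_manifest = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {
        "name": "product-api-pod",          # assumed Pod name
        "labels": {"app": "product-api"},   # label used later by selectors
    },
    "spec": {
        "containers": [
            {
                "name": "product-api",
                "image": IMAGE,                      # the packaged application
                "ports": [{"containerPort": 8080}],  # assumed listening port
            }
        ]
    },
}

# Print the manifest as JSON so it can be inspected or saved to a file.
print(json.dumps(pod_manifest, indent=2))
```

Notice that the manifest does not name a worker node. Choosing the node is left to Kubernetes.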

For a better understanding, please have a look at the following image:

Why Do We Need Pods?

Kubernetes does not treat a standalone container as its basic unit. Instead, it deploys and manages Pods, and containers run inside those Pods. A Pod gives its containers the following:

- Networking Context: Pods provide a shared network identity (IP and ports) so containers can communicate easily within the cluster.

- Storage Access: Pods allow containers to share and use storage volumes for data persistence.

- Lifecycle Management: A Pod allows Kubernetes to start, stop, restart, recreate, and monitor the containers running inside it.

How Pods Work

When we create a Deployment, Kubernetes creates Pods based on the desired state. Each Pod gets its own IP address inside the cluster. If the Pod fails or is deleted, Kubernetes can create a new Pod to replace it.

Note: Pods are temporary. Kubernetes can delete, recreate, or replace Pods when required. A running Pod is not moved between nodes; instead, a replacement Pod is created, possibly on a different node. When a Pod is recreated, it may get a new IP address. That is why we should not directly depend on Pod IP addresses. Instead, we use a Kubernetes Service to access Pods in a stable way.

Real-Life Analogy

A Pod is like a small office cabin where one employee works with all the required tools. The employee is like the application container. The cabin provides space, network, power, and environment for the employee to work.

Similarly, a Pod provides the Kubernetes-managed environment around the container. The container runs the application, but Kubernetes manages the Pod.

Key Points to Remember:

- A Pod is the smallest deployable unit in Kubernetes.

- A Pod usually contains one main application container.

- Kubernetes manages Pods, not just standalone containers.

- Pods are temporary and can be recreated with new IP addresses.

- Pods run on worker nodes.

What is the Control Plane in Kubernetes?

The Control Plane is the brain of Kubernetes. It manages the entire Kubernetes cluster and makes important decisions such as where Pods should run, how many Pods should be active, and what should happen when something fails. It is responsible for:

- Managing the cluster state.

- Accepting requests from users.

- Deciding where Pods should run.

- Monitoring the health of applications and nodes.

- Responding to failures.

- Managing scaling and deployment updates.

- Maintaining the desired state of the cluster.

For a better understanding, please have a look at the following image:

Why Do We Need the Control Plane?

Because:

- Someone must decide where applications run.

- Someone must monitor health.

- Someone must handle failures and scaling.

These responsibilities are handled by the Control Plane in Kubernetes. Without the Control Plane, Kubernetes would not know what should run in the cluster, where it should run, or how to recover when something goes wrong.

For example, when we say “run three Pods of Product API,” the control plane understands this desired state and coordinates with worker nodes to achieve it. If one Pod fails, the control plane detects the mismatch and starts the recovery process.

Important Control Plane Components

As a beginner, you do not need to master every internal component immediately, but it is useful to know the main responsibilities:

- API Server: The API Server is the main entry point of Kubernetes and receives all requests from users, tools, and other Kubernetes components.

- Scheduler: The Scheduler decides on which Worker Node a newly created Pod should run based on available resources and rules.

- Controller Manager: The Controller Manager continuously checks the cluster and takes action when the actual state does not match the desired state.

- etcd: etcd is the distributed key-value store in which Kubernetes keeps cluster data, configuration, and state.

In AKS, Azure manages much of the control plane complexity for us. This is one of the major benefits of using Azure Kubernetes Service. We mainly focus on deploying and managing workloads, while Azure handles many control-plane responsibilities.

Real-Life Analogy

The Control Plane is like the principal of a school. The principal does not teach every class directly, but manages the school, assigns responsibilities, checks whether everything is working properly, and makes decisions when there is a problem. Similarly, the Kubernetes Control Plane manages the cluster, but the actual applications run on worker nodes.

Key Points to Remember:

- The control plane is the brain of Kubernetes.

- It manages cluster decisions and the desired state.

- It decides where Pods should run.

- It watches the current state and takes corrective action when required.

- In AKS, Azure manages much of the control plane complexity.

What are the Desired State and Actual State in Kubernetes?

One of the most important concepts in Kubernetes is the desired state. Kubernetes works by continuously comparing what we want with what is currently running in the cluster.

- Desired state: What we want the system to look like.

- Actual state: What is currently running in the cluster.

For example, suppose we tell Kubernetes:

- Run 3 Pods of Product API.

- Use this Docker image.

- Expose the application through a Service.

This is the desired state. Kubernetes then checks the actual state. If only 2 Pods are running, Kubernetes creates one more Pod. If one Pod fails, Kubernetes replaces it. If the image version changes, Kubernetes updates the running Pods according to the deployment strategy.

Why is the Desired State Important?

The desired state is the foundation of Kubernetes automation. We do not normally tell Kubernetes every manual step. Instead, we describe the final state we want, and Kubernetes continuously works to reach and maintain that state.

This is different from traditional server management, where an administrator may manually log in to a server, start a process, restart a service, or copy files. Kubernetes works declaratively. We declare what we want, and Kubernetes handles the required actions.

So, we need a Desired State because it helps Kubernetes determine what should be running and what actions to take when the actual state changes.

- Self-Healing: Desired State helps Kubernetes automatically recreate or restart Pods when they fail.

- Automatic Scaling: Desired State helps Kubernetes increase or decrease the number of Pods based on the required replica count or scaling rules.

- Continuous Monitoring: Desired State allows Kubernetes to continuously compare what we want with what is currently running in the cluster.

How Kubernetes Maintains the Desired State

Kubernetes controllers continuously watch the cluster. They compare the desired state stored in Kubernetes with the actual state running on worker nodes. If there is a mismatch, Kubernetes takes corrective action.

For example:

- If the desired state is 3 Pods but only 2 are running, Kubernetes creates another Pod.

- If a Pod becomes unhealthy, Kubernetes can replace it.

- If a new image version is applied, Kubernetes updates Pods gradually.

- If we scale from 3 replicas to 5 replicas, Kubernetes creates 2 additional Pods.
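The examples above all follow the same pattern: compare the desired count with the actual count and act on the difference. Here is a minimal Python sketch of that comparison. The `reconcile` function and the Pod names are hypothetical, and real controllers are considerably more involved.

```python
from typing import List


def reconcile(desired_replicas: int, running_pods: List[str]) -> List[str]:
    """Return the actions needed to move the actual state toward the desired state."""
    difference = desired_replicas - len(running_pods)
    if difference > 0:
        # Too few Pods are running: create the missing ones.
        return [f"create pod #{i + 1}" for i in range(difference)]
    if difference < 0:
        # Too many Pods are running: remove the surplus.
        # Which Pods get removed is simplified here to "the last ones in the list".
        return [f"delete {name}" for name in running_pods[difference:]]
    return []  # The actual state already matches the desired state.


print(reconcile(3, ["pod-a", "pod-b"]))                    # one Pod missing
print(reconcile(5, ["pod-a", "pod-b", "pod-c"]))           # scaled from 3 to 5
print(reconcile(3, ["pod-a", "pod-b", "pod-c", "pod-d"]))  # one Pod too many
```

Kubernetes runs this kind of check repeatedly, so a mismatch that appears later is corrected as well.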

Real-Life Analogy

Think of the desired state like a restaurant manager’s instruction: keep 5 billing counters open during lunch time. If only 4 counters are open, the supervisor arranges one more counter. If 6 counters are open unnecessarily, one counter may be closed.

Similarly, Kubernetes continuously checks whether the actual running system matches what we requested and, when it does not, tries to bring the actual state back in line with the desired state.

Key Points to Remember:

- The desired state means what we want Kubernetes to maintain.

- Actual state means what is currently running.

- Kubernetes continuously compares the desired state and the actual state.

- If there is a mismatch, Kubernetes tries to fix it.

- This concept is the foundation of self-healing and scaling in Kubernetes.

What is a Deployment in Kubernetes?

A Deployment is a Kubernetes object used to deploy and manage application Pods. It defines the desired state of an application, including which container image to use, how many copies to run, and how updates should be applied. In simple words, a Deployment is a blueprint for running Pods. A Deployment manages ReplicaSets, and ReplicaSets manage the required number of Pods.

A Deployment tells Kubernetes:

- Which container image to use.

- How many copies of the application should run.

- Which ports the container exposes.

- How updates should be applied.

- How to maintain the desired state.
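The items above map onto fields of a Deployment definition. The following Python sketch builds such a definition as a dictionary using the `apps/v1` Deployment structure. The image name, the port, and the rolling update numbers are assumptions chosen for illustration.

```python
import json

APP_LABELS = {"app": "product-api"}

deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "product-api"},
    "spec": {
        "replicas": 3,  # how many copies should run
        "selector": {"matchLabels": dict(APP_LABELS)},  # which Pods belong to it
        "strategy": {  # how updates are applied
            "type": "RollingUpdate",
            "rollingUpdate": {"maxSurge": 1, "maxUnavailable": 0},  # assumed values
        },
        "template": {  # blueprint for every Pod
            "metadata": {"labels": dict(APP_LABELS)},
            "spec": {
                "containers": [
                    {
                        "name": "product-api",
                        # Hypothetical image name.
                        "image": "myregistry.azurecr.io/product-api:1.0",
                        "ports": [{"containerPort": 8080}],  # assumed port
                    }
                ]
            },
        },
    },
}

print(json.dumps(deployment, indent=2))
```

The selector labels and the Pod template labels are kept identical here because the Deployment uses the selector to find the Pods created from its template.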

For example, suppose we want to run three copies of our ASP.NET Core Web API. We do not manually create three Pods one by one. Instead, we create a Deployment and tell Kubernetes to use this Docker image, run three replicas, keep them running, recreate failed Pods, and update the application safely when a new version is released. For a better understanding, please have a look at the following image:

Why Do We Need Deployment?

Without Deployment:

- We may need to manage Pods manually.

- Application updates become difficult.

- Rolling updates and rollbacks are not easy.

- Managing replicas becomes less convenient.

- Production-level application management becomes harder.

How Deployment Works

- We create a Deployment.

- The Deployment creates a ReplicaSet.

- The ReplicaSet creates the required number of Pods.

- Pods run the application containers.

Real-Life Analogy

A Deployment is like an instruction given to a factory supervisor. For example, the factory owner says:

- Produce 3 identical machines.

- Keep them running.

- If one machine stops working, replace it.

- If a new model comes, update the machines gradually.

The supervisor follows these instructions and maintains the expected output.

Similarly, a Kubernetes Deployment tells Kubernetes which image to use, how many Pods to run, how to update the application, and how to maintain the desired state.

Key Points to Remember:

- Deployment is used to deploy and manage application Pods.

- Deployment defines image, replicas, ports, and update strategy.

- We usually create Deployments instead of creating Pods manually.

- Deployment manages ReplicaSets behind the scenes.

- Deployment helps Kubernetes maintain the desired state.

What are Replica and ReplicaSet in Kubernetes?

In Kubernetes, Replica and ReplicaSet are closely related concepts. A Replica means a running copy of an application Pod, while a ReplicaSet ensures the required number of replicas are always running. For example, suppose we want to run three copies of our ASP.NET Core Web API application. In Kubernetes, this means we want three Pods running the same application image. Each running Pod is called one replica.

So, if we say we need 3 replicas, it means Kubernetes should keep 3 running Pods of the same application. For example:

- Replica 1: Product API Pod

- Replica 2: Product API Pod

- Replica 3: Product API Pod

All three Pods run the same application image, but they are separate running instances. For a better understanding, please have a look at the following image:

Why Do We Need Replicas?

In real-world applications, running only one Pod is risky. If that single Pod fails, the application may become unavailable. Also, one Pod may not be enough to handle high traffic. Replicas help solve this problem by running multiple copies of the same application.

We need replicas for:

- Handling more user requests

- Improving application availability

- Reducing downtime

- Distributing traffic across multiple Pods

- Recovering from Pod failures

For example, suppose only one Product API Pod is running. If that Pod crashes, users cannot access the Product API until it is restarted or recreated. But if three Product API Pods are running and one Pod fails, the remaining two Pods can still handle user requests. Kubernetes can then create another Pod to bring the count back to three.

What is a ReplicaSet?

A ReplicaSet is a Kubernetes object that ensures the required number of Pod replicas are running. For example, if the desired replica count is 3, the ReplicaSet continuously checks whether 3 Pods are running. If one Pod fails and only 2 Pods are available, the ReplicaSet creates one more Pod.

So, ReplicaSet acts like a controller that maintains the required number of Pods.

In simple words:

- A replica means one running copy of a Pod.

- A ReplicaSet is the controller that maintains the required number of replicas.

How Replica and ReplicaSet Work Together

Let us understand this with an example. Suppose we create a Deployment and mention that we need 3 replicas of the Product API. Kubernetes creates a ReplicaSet for this Deployment. The ReplicaSet then creates 3 Pods.

Now the running state is:

- Product API Pod 1

- Product API Pod 2

- Product API Pod 3

If Product API Pod 2 crashes, the actual running count becomes 2. But the desired count is still 3. The ReplicaSet notices this mismatch and creates a new Pod. After recovery, the running state may become:

- Product API Pod 1

- Product API Pod 3

- Product API Pod 4

The failed Pod is not necessarily repaired with the same name or the same IP address. Instead, Kubernetes creates a replacement Pod. This is why Pods are considered temporary.
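The replacement behaviour described above can be imitated with a small Python model. This is only a toy: the `ReplicaSetSketch` class, the Pod names, and the IP addresses are invented for the example and do not reflect how Kubernetes actually names Pods or assigns addresses.

```python
import itertools


class ReplicaSetSketch:
    """Toy model of a ReplicaSet; names and IPs are generated for illustration only."""

    def __init__(self, app: str, desired: int):
        self.app = app
        self.desired = desired
        self._counter = itertools.count(1)
        self.pods = {}  # Pod name -> Pod IP
        self.reconcile()

    def _new_pod(self) -> None:
        """Create a Pod with a fresh name and a fresh (made-up) IP address."""
        n = next(self._counter)
        self.pods[f"{self.app}-pod-{n}"] = f"10.244.0.{n + 10}"

    def reconcile(self) -> None:
        """Create Pods until the running count equals the desired count."""
        while len(self.pods) < self.desired:
            self._new_pod()

    def crash(self, pod_name: str) -> None:
        """Simulate a Pod failure by removing it from the running set."""
        del self.pods[pod_name]


rs = ReplicaSetSketch("product-api", desired=3)
rs.crash("product-api-pod-2")   # actual count drops to 2
rs.reconcile()                  # a replacement Pod is created
print(sorted(rs.pods))
print(rs.pods["product-api-pod-4"])
```

The replacement is a new Pod with a new name and a new IP address, not the old Pod brought back to life.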

Relationship Between Deployment, ReplicaSet, Replica, Pod, and Container

In real projects, we usually do not create ReplicaSets directly. We create a Deployment, and Kubernetes automatically creates a ReplicaSet behind the scenes.

The relationship is:

- Deployment manages ReplicaSet.

- ReplicaSet maintains the required number of replicas.

- Each replica is one running Pod.

- Pod runs the application container.

- Container runs our actual application.

So, the flow is: Deployment → ReplicaSet → Replicas/Pods → Containers → Application

For example:

- Deployment says: “Run 3 copies of Product API.”

- ReplicaSet ensures: “Exactly 3 Product API Pods should be running.”

- Pods run: “Product API application containers.”

How ReplicaSet Identifies Pods

A ReplicaSet uses labels and selectors to identify which Pods belong to it. For example, Product API Pods may have a label like: app=product-api. The ReplicaSet uses this label to find and manage the correct Pods.

If the ReplicaSet expects 3 Pods with the label app=product-api and only 2 are running, it creates one more matching Pod. If 4 matching Pods are running, it may remove one Pod to bring the count back to 3. This is how ReplicaSet maintains the desired number of application Pods.
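Label matching of this kind can be sketched in a few lines of Python. The `matches` helper and the Pod list are hypothetical, and the sketch covers only simple equality-based selectors such as app=product-api.

```python
from typing import Dict


def matches(selector: Dict[str, str], labels: Dict[str, str]) -> bool:
    """True when every key/value pair in the selector is present in the labels."""
    return all(labels.get(key) == value for key, value in selector.items())


# Made-up Pods with their labels.
pods = [
    {"name": "product-api-1", "labels": {"app": "product-api", "tier": "backend"}},
    {"name": "product-api-2", "labels": {"app": "product-api"}},
    {"name": "order-api-1", "labels": {"app": "order-api"}},
]

selector = {"app": "product-api"}
owned = [p["name"] for p in pods if matches(selector, p["labels"])]

desired = 3
print(owned)
print(f"missing Pods: {desired - len(owned)}")
```

Extra labels on a Pod, such as `tier` here, do not prevent a match. Only the labels named in the selector are compared.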

Real-Life Analogy

Think of replicas like billing counters in a supermarket. Suppose the supermarket manager says, “Always keep 3 billing counters open.”

Here:

- Each billing counter is like a replica.

- The supervisor is like the ReplicaSet.

- The manager’s instruction is like the desired state.

If one cashier leaves the counter, only 2 counters are active. The supervisor notices this and arranges another cashier so that 3 counters are open again.

Similarly, in Kubernetes, if one Pod fails, the ReplicaSet creates another Pod to maintain the required number of replicas.

Key Points to Remember:

- A Replica means one running copy of an application Pod.

- If we say 3 replicas, it means 3 Pods of the same application should run.

- A ReplicaSet ensures that the required number of replicas are always running.

- If one Pod fails, the ReplicaSet creates another Pod.

- ReplicaSet uses labels and selectors to identify the Pods it manages.

- In real projects, we usually create Deployments directly, not ReplicaSets.

- Deployment manages ReplicaSets, and ReplicaSets manage Pods.

What is Self-Healing in Kubernetes?

Self-healing means Kubernetes automatically tries to recover the application when something goes wrong. It is one of the biggest reasons Kubernetes is used in production environments. Let us understand with an example. Suppose we have a Deployment with three replicas:

- Product API Pod 1

- Product API Pod 2

- Product API Pod 3

Now, suppose Pod 2 crashes.

- Without Kubernetes, someone may need to manually check and restart the container.

- With Kubernetes, the system notices that the desired state is 3 Pods, but only 2 are running.

So, Kubernetes automatically creates a new Pod. After recovery, we may have:

- Product API Pod 1

- Product API Pod 3

- Product API Pod 4

The name or IP address may change, but the application remains available through the Service. This is self-healing. For a better understanding, please have a look at the following image:

Why Do We Need Self-Healing?

In production, failures are normal. A container can crash, a Pod can become unhealthy, or a node can fail. If every failure requires manual intervention, the application becomes difficult to operate. Kubernetes reduces this manual work by automatically attempting to restore the system to its desired state. Self-healing improves application availability because Kubernetes reacts to failures quickly and consistently.

How Self-Healing Works

Kubernetes constantly compares the desired state with the actual state:

- Desired state: What we want.

- Actual state: What is currently running.

If the actual state does not match the desired state, Kubernetes tries to fix it. For example:

- If a Pod crashes, Kubernetes can restart or replace it.

- If a node becomes unavailable, Kubernetes can reschedule Pods on healthy nodes depending on capacity and configuration.

- If the required replica count is not met, Kubernetes creates new Pods.

- If health probes fail, Kubernetes reacts according to the probe type: a failing readiness probe stops traffic from being sent to the Pod, while a failing liveness probe restarts the container.
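To show how the probe type changes the reaction, here is a small Python sketch. The probe fields (`livenessProbe`, `readinessProbe`, `httpGet`, `periodSeconds`, `failureThreshold`) are real container settings, but the paths, the port, and the timings are assumed values, and the `action_on_probe_failure` helper is hypothetical.

```python
def action_on_probe_failure(probe_type: str) -> str:
    """Describe the reaction when a probe of the given type keeps failing."""
    if probe_type == "liveness":
        return "restart the container"
    if probe_type == "readiness":
        return "stop sending Service traffic to the Pod until the probe passes again"
    raise ValueError(f"unknown probe type: {probe_type}")


# Probe settings as they could appear on a container (assumed paths, port, and timings).
container_probes = {
    "livenessProbe": {
        "httpGet": {"path": "/healthz", "port": 8080},
        "periodSeconds": 10,
        "failureThreshold": 3,
    },
    "readinessProbe": {
        "httpGet": {"path": "/ready", "port": 8080},
        "periodSeconds": 5,
        "failureThreshold": 3,
    },
}

for field in container_probes:
    probe_type = field.replace("Probe", "")  # "livenessProbe" -> "liveness"
    print(f"{probe_type}: {action_on_probe_failure(probe_type)}")
```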

Real-Life Analogy

Self-healing is like an automatic backup generator in a hospital. If the main electricity supply fails, the hospital cannot stop working. The backup generator starts automatically and keeps important systems running. Similarly, if a Pod fails, Kubernetes automatically creates another Pod or restarts the workload to keep the application running.

Key Points to Remember:

- Self-healing means automatic recovery.

- Kubernetes replaces or restarts failed Pods.

- Kubernetes tries to maintain the desired state.

- Health probes help Kubernetes identify unhealthy applications.

- Self-healing improves availability and reduces manual intervention.

What Is a Service in Kubernetes?

A Service in Kubernetes provides a stable way to access Pods. Pods are temporary. They can be created, deleted, restarted, or replaced. When this happens, the Pod IP address may change.

Now imagine this situation:

- Product API Pod 1 has one IP address.

- It crashes.

- Kubernetes creates a new Pod.

- The new Pod gets a different IP address.

If users or other applications directly depend on Pod IP addresses, the system becomes unreliable. This is why Kubernetes provides a Service.

A Kubernetes Service typically provides a stable virtual IP address and a DNS name. Other applications can call the Service name instead of calling individual Pod IP addresses.
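As a small illustration of the DNS name, the Python sketch below builds the fully qualified name of a Service from its name and namespace. The Service name `product-service` is hypothetical, and `cluster.local` is the commonly used default cluster domain, which a particular cluster may configure differently.

```python
def service_dns_name(service: str, namespace: str = "default",
                     cluster_domain: str = "cluster.local") -> str:
    """Build the in-cluster DNS name for a Service."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


# Another application in the cluster could call the Product API through this name.
print(service_dns_name("product-service"))
print(service_dns_name("product-service", namespace="shop"))
```

Because this name stays the same when Pods are replaced, callers never need to know individual Pod IP addresses.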

For example, if we have three Product API Pods, a Kubernetes Service can expose them as one stable application endpoint. Instead of calling each Pod directly, users or other services call the Kubernetes Service. The Service then forwards traffic to one of the available Pods. For a better understanding, please have a look at the following image:

Why Do We Need a Service?

Pods are not permanent, and their IP addresses are not reliable for long-term communication. A Service solves this problem by providing a stable access point for a group of Pods.

In a microservices application, the Order API may need to call the Product API. The Order API should not call a specific Product API Pod IP. Instead, it should call the Product Service name. Kubernetes then forwards the request to one of the healthy Product API Pods.

How Service Works

A Service uses labels and selectors to find the Pods it should send traffic to. For example, if Product API Pods have the label app=product-api, the Product Service can use that label to identify those Pods.

The request flow is usually like this:

- A user or another service calls the Kubernetes Service.

- The Service identifies the matching Pods using labels.

- The Service forwards traffic to one of the available Pods.

- The selected Pod processes the request.
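The request flow above can be imitated with a short Python model. This sketch assumes simple round-robin selection among ready Pods; in a real cluster, traffic distribution is handled by the cluster networking layer and is not necessarily round-robin. The `ServiceSketch` class, the Pod IPs, and the `ready` flag are invented for the example.

```python
import itertools
from typing import Dict, List


class ServiceSketch:
    """Toy Service: picks matching, ready Pods in round-robin order (an assumption)."""

    def __init__(self, selector: Dict[str, str], pods: List[dict]):
        backends = [
            p for p in pods
            if p["ready"] and all(p["labels"].get(k) == v for k, v in selector.items())
        ]
        if not backends:
            raise RuntimeError("no ready Pods match the selector")
        self._cycle = itertools.cycle(backends)

    def route(self) -> str:
        """Return the IP of the Pod that would receive the next request."""
        return next(self._cycle)["ip"]


pods = [
    {"ip": "10.244.0.11", "ready": True, "labels": {"app": "product-api"}},
    {"ip": "10.244.0.12", "ready": False, "labels": {"app": "product-api"}},
    {"ip": "10.244.0.13", "ready": True, "labels": {"app": "product-api"}},
    {"ip": "10.244.0.21", "ready": True, "labels": {"app": "order-api"}},
]

service = ServiceSketch({"app": "product-api"}, pods)
print([service.route() for _ in range(4)])
```

The Pod that is not ready and the Pod with a different label never receive traffic in this model.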

Common Service Types in Kubernetes

Kubernetes provides different types of Services depending on whether the application should be accessed only within the cluster or from outside it.

- ClusterIP – Internal Cluster Access: ClusterIP exposes the application only within the Kubernetes cluster, so it is primarily used when one internal service needs to communicate with another.

- NodePort – Access Through Worker Node Port: NodePort exposes the application using a fixed port on each Worker Node, so it is mostly useful for learning, testing, or simple access scenarios.

- LoadBalancer – External Access Through Cloud Load Balancer: LoadBalancer exposes the application to external users via a cloud load balancer; in AKS, it can automatically create an Azure Load Balancer.
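In a Service definition, the type is a single field. The Python sketch below builds a Service manifest as a dictionary for any of the three types. The `make_service` helper, the Service names, and the port numbers are assumptions made for illustration.

```python
VALID_TYPES = {"ClusterIP", "NodePort", "LoadBalancer"}


def make_service(name: str, app_label: str, service_type: str = "ClusterIP",
                 port: int = 80, target_port: int = 8080) -> dict:
    """Build a Service manifest that selects Pods labelled app=<app_label>."""
    if service_type not in VALID_TYPES:
        raise ValueError(f"unsupported Service type: {service_type}")
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": service_type,
            "selector": {"app": app_label},
            # 'port' is what callers use; 'targetPort' is the container port.
            "ports": [{"port": port, "targetPort": target_port}],
        },
    }


internal = make_service("product-service", "product-api")                  # cluster-only access
external = make_service("product-public", "product-api", "LoadBalancer")   # external access
print(internal["spec"]["type"], external["spec"]["type"])
```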

Real-Life Analogy

A Kubernetes Service is like a restaurant reception desk. When customers enter a restaurant, they do not directly go to a specific waiter or kitchen staff. They go to the reception or ordering counter. The reception then forwards the request to the available waiter or kitchen section.

Similarly, users should not directly call individual Pods because Pods are temporary and their IP addresses can change. Instead, users or other applications call the Service. The Service forwards the request to one of the available Pods.

Key Points to Remember:

- Pods are temporary, and Pod IP addresses can change.

- Service provides a stable endpoint for accessing Pods.

- Service forwards traffic to available Pods.

- Service uses labels and selectors to find matching Pods.

- Pods run the application, but Services expose the application.

What Is Ingress in Kubernetes?

A Service in Kubernetes provides a stable way to access Pods. But when we want to expose an application to external users using a proper domain name, URL path, or HTTPS, then using only a Service may not be enough. This is where Ingress comes into the picture.

Ingress is a Kubernetes object that defines rules for external HTTP and HTTPS access to applications running inside the cluster. These rules are implemented by an Ingress Controller.

In simple terms, Ingress defines routing rules, and the Ingress Controller acts as a smart entry point for web traffic. The Ingress Controller receives user requests from outside the cluster and routes those requests to the correct Kubernetes Service. For example, suppose we have multiple ASP.NET Core Web API services running inside Kubernetes:

- Product API

- Order API

- Payment API

- Notification API

Without Ingress, we may expose each application separately using different LoadBalancer Services. This can become difficult to manage and may also increase cost because each LoadBalancer Service can create a separate external load balancer in cloud environments like Azure.

With Ingress, we can expose multiple services using a single external entry point. For example:

- https://myapp.com/products can route traffic to Product Service

- https://myapp.com/orders can route traffic to Order Service

- https://myapp.com/payments can route traffic to Payment Service

So, Ingress helps us manage external access in a cleaner and more controlled way. For a better understanding, please have a look at the following image:

Why Do We Need Ingress?

In real-world applications, we usually do not want users to access every service using different IP addresses or ports. Instead, we want a clean and professional URL-based access system. For example, users should access our application like this:

- https://api.mycompany.com/products

- https://api.mycompany.com/orders

- https://api.mycompany.com/payments

They should not access it like this:

- http://20.10.30.40:30001

- http://20.10.30.41:30002

- http://20.10.30.42:30003

Ingress helps solve this problem by providing proper routing rules for external traffic. Ingress can help us with:

- Routing external traffic to internal Services

- Using domain names instead of direct IP addresses

- Managing path-based routing

- Managing host-based routing

- Supporting HTTPS/TLS termination

- Reducing the need for multiple external LoadBalancer Services

- Providing a cleaner entry point for microservices-based applications

In simple terms, Services expose Pods, but Ingress controls how external users reach those Services via proper URLs.

How Ingress Works

Ingress itself only defines the routing rules. To make those rules work, we need an Ingress Controller. An Ingress Controller is the component that reads Ingress rules and handles incoming traffic. Without an Ingress Controller, the Ingress rules will not work. For example, in AKS, we can use an Ingress Controller such as:

- NGINX Ingress Controller

- Azure Application Gateway Ingress Controller

The Ingress Controller receives the external request, checks the Ingress rules, and forwards it to the correct Kubernetes Service. The Service then forwards the request to one of the available Pods.

So, the request flow is usually like this:

- User sends a request to the domain or URL

- Ingress Controller receives the request

- Ingress rules decide where the request should go

- Traffic is forwarded to the correct Service

- Service forwards traffic to the correct Pod

- Pod runs the application container and processes the request

For example:

- User calls https://api.myapp.com/products

- Ingress routes the request to Product Service

- Product Service forwards the request to one of the Product API Pods

This makes external access more organized and production-friendly.
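
To make the request flow more concrete, here is a small Python sketch of how host and path rules could map a request to a Service. It is a simplified model under stated assumptions: the Service names (`product-service`, `order-service`, `payment-service`) are hypothetical, and a real Ingress Controller does far more (TLS, load balancing, health checks). The matching rule used here, prefix matching on whole path segments with the longest match winning, is only meant to illustrate path-based routing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    host: str
    path_prefix: str
    service: str  # hypothetical Service name used only in this sketch


RULES = [
    Rule("api.myapp.com", "/products", "product-service"),
    Rule("api.myapp.com", "/orders", "order-service"),
    Rule("api.myapp.com", "/payments", "payment-service"),
]


def path_matches(prefix: str, path: str) -> bool:
    """Match on whole path segments: /products matches /products/42 but not /productsX."""
    prefix = prefix.rstrip("/")
    return path == prefix or path.startswith(prefix + "/")


def route(host: str, path: str, rules: list[Rule] = RULES) -> Optional[str]:
    """Return the Service for the longest matching prefix, or None if nothing matches."""
    candidates = [
        r for r in rules
        if r.host == host and path_matches(r.path_prefix, path)
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda r: len(r.path_prefix)).service


print(route("api.myapp.com", "/products/42"))
print(route("api.myapp.com", "/unknown"))
```

A request that matches no rule returns `None` in this sketch. What actually happens to unmatched requests in a cluster depends on the Ingress Controller and its default backend configuration.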

Real-Life Analogy

Ingress is like the main security gate or reception desk of a large office building. Suppose a company building has many departments, such as Sales, Accounts, HR, IT Support, and Management. Visitors do not enter any department randomly. First, they come to the main reception desk. The receptionist checks why the visitor has come and then directs them to the appropriate department.

Similarly, in Kubernetes, external users do not directly access individual Pods. They send requests to the Ingress entry point. The Ingress Controller checks the rules and forwards the request to the correct Service.

For example:

- Product-related request goes to Product Service

- Order-related request goes to Order Service

- Payment-related request goes to Payment Service

So, Ingress acts like a smart receptionist for external traffic entering the Kubernetes cluster.

Service vs Ingress

A Service provides a stable way to access Pods. It is mainly responsible for connecting traffic to the correct set of Pods. Depending on the Service type, a Service can be reachable only from inside the cluster (ClusterIP) or from outside it as well (NodePort or LoadBalancer).

Ingress is primarily used to manage external HTTP and HTTPS traffic. It provides advanced routing based on domain names and URL paths. Ingress usually works in front of Services.

For example:

- Service says: “These Pods belong to Product API.”

- Ingress says: “When the user calls /products, send the request to Product Service.”

So, Service is required to expose Pods, while Ingress is used to route external web traffic to those Services in a clean and controlled way.

Key Points to Remember:

- Ingress manages external HTTP and HTTPS traffic.

- Ingress routes user requests to the correct Kubernetes Service.

- Ingress helps expose applications using domain names and URL paths.

- Ingress requires an Ingress Controller to work.

- Service exposes Pods, while Ingress routes external traffic to Services.

- In AKS, Ingress is commonly implemented using NGINX Ingress Controller or Azure Application Gateway Ingress Controller.

What is a ConfigMap in Kubernetes?

A ConfigMap is used to store non-sensitive configuration values in Kubernetes. These are standard settings the application requires, but they are not confidential.

For example:

- Application environment name.

- Feature flags.

- API base URLs.

- Allowed origin URLs.

- Logging levels.

- Normal application configuration values.

In ASP.NET Core, we commonly use appsettings.json and environment variables. In Kubernetes, ConfigMaps help us provide environment-specific settings to Pods without rebuilding the Docker image.

Example values that can go into a ConfigMap:

- ASPNETCORE_ENVIRONMENT=Production

- Logging__LogLevel__Default=Information

- FeatureManagement__EnableNewDashboard=true
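
The double underscore in these keys matters: ASP.NET Core's environment variable configuration treats `__` as a hierarchy separator, so `Logging__LogLevel__Default` corresponds to the nested `Logging:LogLevel:Default` setting. The following Python sketch only imitates that mapping to show the idea. The function name `nest_settings` is hypothetical, and this is not the actual ASP.NET Core implementation.

```python
def nest_settings(env: dict[str, str]) -> dict:
    """Turn flat keys like 'Logging__LogLevel__Default' into nested dictionaries."""
    root: dict = {}
    for key, value in env.items():
        # Split only on the double underscore; single underscores stay in the key.
        *parents, leaf = key.split("__")
        node = root
        for part in parents:
            node = node.setdefault(part, {})
        node[leaf] = value
    return root


# The same example values listed above, as they might appear in a ConfigMap.
config_map_data = {
    "ASPNETCORE_ENVIRONMENT": "Production",
    "Logging__LogLevel__Default": "Information",
    "FeatureManagement__EnableNewDashboard": "true",
}

settings = nest_settings(config_map_data)
print(settings["Logging"]["LogLevel"]["Default"])
```

Note that ConfigMap values are always strings, so `"true"` stays a string here; the application decides how to interpret it.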

Why Do We Need ConfigMap?

The main benefit of ConfigMap is that it allows us to separate configuration from the application image. We should not create a different Docker image just because the API URL, environment name, or logging level changes.

For example, the same Docker image can be used in multiple environments:

- The same image can be used in Development with development settings.

- The same image can be used in Staging with staging settings.

- The same image can be used in Production with production settings.

So, the application image can remain the same, while the configuration can vary by environment.

What Should Not Go into ConfigMap?

ConfigMap should not be used for passwords, connection strings, API keys, JWT secrets, or any other confidential value. Those values should be stored in a Kubernetes Secret or, in AKS, in a production-grade secret management solution such as Azure Key Vault.

Real-Life Analogy

A ConfigMap is like a notice board in a classroom. The notice board contains general information such as class times, exam dates, teacher names, subject schedules, and classroom rules. Everyone can see it, and it does not contain confidential information. Similarly, ConfigMap stores normal application configuration values such as environment name, logging level, feature flags, and API base URLs.

Key Points to Remember:

- ConfigMap stores non-sensitive configuration.

- ConfigMap helps separate configuration from the application image.

- ConfigMap allows the same image to be used in multiple environments.

- ConfigMap values can be provided as environment variables or mounted as files.

- ConfigMap should not be used for passwords or confidential values.

What Is Secret in Kubernetes?

A Secret is used to store sensitive information in Kubernetes. Sensitive information means confidential values that should not be hardcoded in source code, Docker images, or plain configuration files.

For example:

- Database password.

- Connection string.

- API key.

- JWT secret.

- Email service password or token.

In real applications, we should not hardcode sensitive values inside:

- Source code.

- Dockerfile.

- Docker image.

- Plain YAML files committed to GitHub.

- Application configuration files.

Kubernetes Secret allows us to store sensitive values separately and inject them into Pods when required.

Why Do We Need Secrets?

Secrets help reduce the risk of exposing confidential values. For example, an ASP.NET Core Web API may need a SQL Server connection string. Instead of hardcoding the connection string in the application, we can store it as a Kubernetes Secret and pass it to the container as an environment variable or mounted file.

This makes the application safer and more flexible because the same Docker image can be used across environments while each environment provides its own secret values.

Important Note: Kubernetes Secret is preferable to hardcoding sensitive values, but it should not be treated as a complete secret-management solution on its own. In production AKS environments, prefer Azure Key Vault with managed identity or Workload Identity, proper RBAC, and encryption at rest.
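
One reason for this caution is that values in a Secret's `data` field are base64-encoded, and base64 is an encoding, not encryption. The short Python sketch below illustrates this. The helper names and the sample password are made up for the example; anyone who can read the encoded value can decode it just as easily.

```python
import base64


def encode_secret_value(plain: str) -> str:
    """Encode a value the way it would appear in a Secret's data field."""
    return base64.b64encode(plain.encode("utf-8")).decode("ascii")


def decode_secret_value(encoded: str) -> str:
    """Reverse the encoding; no key or password is needed to do this."""
    return base64.b64decode(encoded).decode("utf-8")


# Hypothetical sample value, for illustration only.
encoded = encode_secret_value("P@ssw0rd!")
recovered = decode_secret_value(encoded)
print(encoded)
print(recovered)
```

This is why access control (RBAC), encryption at rest, and an external store such as Azure Key Vault matter for production workloads.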

Real-Life Analogy

A Secret is like a bank locker. General information can be posted on a notice board, but confidential items such as cash, documents, keys, and passwords should be kept in a locker.

Similarly, sensitive application values such as database passwords, connection strings, JWT keys, and API keys should be stored in Kubernetes Secrets rather than directly in source code or Docker images.

Key Points to Remember:

- Secret stores sensitive information.

- Secrets are used for passwords, keys, tokens, and connection strings.

- Secret helps avoid hardcoding confidential values.

- Secret is different from ConfigMap.

- In production AKS environments, Azure Key Vault is preferred for stronger secret management.

FAQs

Question 1: What is a Docker Image?

A Docker Image is a packaged version of an application. It contains the application code, runtime, libraries, dependencies, and instructions required to run the application. For example, an ASP.NET Core Web API Docker Image contains the Web API application files and the required .NET runtime.

So, in simple words:

- A Docker Image is a packaged application.

- A Docker Image contains everything required to run the application.

- The Docker Image itself does not run.

- When we run a Docker Image, it becomes a Container.

Example:

- Docker Image: Product API packaged application

- Container: Running Product API application

Question 2: What is a Container?

A Container is a running instance of a Docker Image. It runs the actual application process. For example, if we have an ASP.NET Core Web API Docker Image, running that image creates a Container. That Container runs the ASP.NET Core Web API application.

So, in simple words:

- A Docker Image is a packaged application.

- A Container is the running application.

Example:

- Docker Image: Product API image

- Container: Running Product API application

Question 3: Does the Container run the application?

Yes. The Container runs the actual application. For example, if we containerize an ASP.NET Core Web API application, the application runs inside the Container. The Container contains or uses the required application runtime, libraries, dependencies, and application files needed to run the application.

So, we can say:

- The application runs inside the Container.

- The Container is responsible for running the application.

- Without the Container, our packaged application will not run.

Question 4: If the Container runs the application, then why do we need a Pod?

We need a Pod because Kubernetes does not directly manage standalone containers as the smallest deployment object. Kubernetes manages Pods.

A Pod acts as a Kubernetes wrapper around one or more containers. The container runs the application, but the Pod gives Kubernetes a standard unit to schedule, monitor, restart, delete, recreate, and manage.

So, the Container runs the application, but the Pod allows Kubernetes to manage that application properly.

In simple words:

- Container runs the application.

- Pod manages how that container runs inside Kubernetes.

Question 5: What is a Pod?

A Pod is the smallest deployable unit in Kubernetes. It is the Kubernetes object that contains one or more containers. Most of the time, a Pod contains a single main application container. For example, a Product API Pod typically contains a single Product API container.

So, the relationship is:

- Application is packaged as a Docker Image.

- A Docker Image runs as a Container.

- Container runs inside a Pod.

- Pod runs on a Worker Node.

Question 6: What do we mean by the smallest deployable unit in Kubernetes?

The smallest deployable unit means the smallest object that Kubernetes can create, schedule, run, restart, delete, and manage. In Kubernetes, we do not usually deploy a Container directly. We deploy a Pod. Kubernetes places the Pod on a Worker Node, and the Container runs inside that Pod.

So, when we say Pod is the smallest deployable unit, it means:

- Kubernetes schedules Pods, not standalone containers.

- Kubernetes starts Pods, and containers run inside those Pods.

- Kubernetes restarts containers inside a Pod or recreates the Pod when required.

- Kubernetes gives IP addresses to Pods, not directly to individual containers.

- Kubernetes connects Services to Pods, not directly to containers.

Example:

If we want to run the Product API in Kubernetes, we do not say:

- Run this container directly.

Instead, Kubernetes creates a Pod, and inside that Pod, the Product API container runs.

Question 7: What is a Node?

A Node is a machine inside the Kubernetes cluster where Pods run. It can be a physical server, virtual machine, or cloud-based virtual machine. In AKS, a Node is usually an Azure Virtual Machine. The Node provides CPU, memory, storage, and networking resources required to run Pods.

So, in simple words:

- A Node is a machine in the Kubernetes cluster.

- Pods run on Nodes.

- Containers run inside Pods.

- Applications run inside Containers.

- Nodes provide resources to run applications.

Example:

- Worker Node: Azure Virtual Machine

- Pod: Product API Pod running on that Worker Node

Question 8: Containers already contain the runtime environment, so what environment does the Pod provide?

This is a very important point. A Container contains the application runtime environment. For example, an ASP.NET Core container may contain the .NET runtime, application DLLs, libraries, and dependencies.

But a Pod provides the Kubernetes runtime context around the container. The Pod provides things such as:

- Network identity inside Kubernetes

- Pod IP address

- Shared network namespace for containers inside the same Pod

- Storage volumes that containers can use

- Lifecycle management

- Restart policy

- Configuration injection through ConfigMaps

- Secret injection through Kubernetes Secrets

- Health checking support using probes

- A unit that Kubernetes can schedule on a Worker Node

So, the Container provides the environment needed to run the application code, while the Pod provides the environment needed to run and manage that container inside Kubernetes.

Question 9: How are Docker Image, Container, Pod, and Node related?

The relationship is:

- A Docker Image is a packaged application.

- A Container is the running instance of the Docker Image.

- A Pod contains the Container.

- Node runs the Pod.

Flow: Docker Image → Container → Pod → Node

Example:

- Product API image becomes a Product API Container.

- Product API Container runs inside a Product API Pod.

- Product API Pod runs on a Worker Node.
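
The same containment relationship can be written as a small data model. This Python sketch is only an illustration: the class names mirror the terms above, while the image tag `product-api:1.0` and the node name `worker-node-1` are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Container:
    name: str
    image: str  # the Docker Image this Container was started from


@dataclass
class Pod:
    name: str
    containers: list[Container] = field(default_factory=list)


@dataclass
class Node:
    name: str
    pods: list[Pod] = field(default_factory=list)


def describe(node: Node) -> list[str]:
    """List each image -> container -> pod -> node chain found on the Node."""
    return [
        f"{c.image} -> {c.name} -> {pod.name} -> {node.name}"
        for pod in node.pods
        for c in pod.containers
    ]


# Hypothetical names used only for this sketch.
container = Container(name="product-api", image="product-api:1.0")
pod = Pod(name="product-api-pod", containers=[container])
node = Node(name="worker-node-1", pods=[pod])

for line in describe(node):
    print(line)
```

Because `containers` and `pods` are lists, the same model also covers a Pod with a sidecar container and a Node running several Pods.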

Question 10: Can one Pod contain multiple Containers?

Yes, one Pod can contain multiple containers. But in most real-world applications, a Pod typically contains a single main application container. Multiple containers are used inside the same Pod only when they are tightly related and must work together.

For example:

- Main container: ASP.NET Core Web API

- Sidecar container: Logging agent or monitoring helper

Both containers inside the same Pod can share the same network and storage context.

Question 11: Who decides on which Node a Pod should run?

The Kubernetes Scheduler, part of the Control Plane, decides which Worker Node a Pod should run on. It checks available CPU, memory, node health, and scheduling rules before placing the Pod on a suitable Node.
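
As a rough illustration of that decision, the Python sketch below first filters out Nodes that cannot run the Pod and then picks one of the remaining Nodes. This is a deliberately simplified model and not the real Scheduler: the `NodeInfo` fields, the node names, and the "most free resources wins" scoring rule are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class NodeInfo:
    name: str
    free_cpu_millicores: int
    free_memory_mib: int
    healthy: bool = True


def pick_node(nodes: list[NodeInfo], cpu_request: int, memory_request: int) -> Optional[str]:
    """Filter Nodes that can fit the Pod, then choose the one with the most spare capacity."""
    feasible = [
        n for n in nodes
        if n.healthy
        and n.free_cpu_millicores >= cpu_request
        and n.free_memory_mib >= memory_request
    ]
    if not feasible:
        return None  # in this sketch, the Pod simply stays unscheduled
    # Made-up scoring rule: prefer the Node left with the most CPU, then memory.
    best = max(
        feasible,
        key=lambda n: (n.free_cpu_millicores - cpu_request, n.free_memory_mib - memory_request),
    )
    return best.name


# Hypothetical cluster state for illustration.
nodes = [
    NodeInfo("node-1", free_cpu_millicores=500, free_memory_mib=1024),
    NodeInfo("node-2", free_cpu_millicores=2000, free_memory_mib=4096),
    NodeInfo("node-3", free_cpu_millicores=4000, free_memory_mib=8192, healthy=False),
]

print(pick_node(nodes, cpu_request=250, memory_request=512))
```

Here `node-3` has the most free resources but is skipped because it is unhealthy, which mirrors the point that node health is checked before placement.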

Question 12: Can a Cluster have multiple Worker Nodes?

Yes. A Kubernetes Cluster can have multiple Worker Nodes. A Worker Node is a machine where application Pods run. In real-world production environments, we usually have multiple Worker Nodes so that Kubernetes can distribute workloads, handle more traffic, and improve availability.

For example:

- Worker Node 1

- Worker Node 2

- Worker Node 3

Together, these Worker Nodes form a single Kubernetes Cluster.

Why multiple nodes?

- To distribute workloads

- To handle more traffic

- To provide high availability

- To ensure fault tolerance

Question 13: Can a Worker Node run multiple Pods?

Yes. A Worker Node can run multiple Pods, depending on its available CPU, memory, storage, and networking capacity.

For example, one Worker Node can run:

- Product API Pod

- Order API Pod

- Payment API Pod

- Notification API Pod

If the Worker Node has enough resources, Kubernetes can schedule many Pods on the same Worker Node.

Conclusion:

Kubernetes Architecture becomes easy to understand when we learn it step by step. A Cluster provides the complete Kubernetes environment, Worker Nodes run Pods, Pods run Containers, and the Control Plane manages everything. Services and Ingress help users access the application, while ConfigMaps and Secrets provide configuration values. Together, these components help Kubernetes run applications in a scalable, stable, and production-ready manner.