SignalR simplifies real-time development in .NET by abstracting persistent connections (WebSockets, with Server-Sent Events and Long Polling as fallbacks) behind a bi-directional hub API. For high-traffic platforms, however, implementing it well requires more than basic hub configuration. It demands a solid understanding of backplane scaling, connection life-cycle management, and transport fallback strategies. Mastering these elements is essential for developers who want to deliver low-latency, “push-model” experiences that remain stable under heavy concurrent load.

When architecting systems that demand instantaneous data delivery, studying platforms built for high-concurrency live updates provides valuable technical insight. Apps such as the 1xbet india app illustrate how real-time push notifications and fast-changing odds data must be delivered with millisecond precision. The difference between a functional app and a world-class one often lies in how gracefully its SignalR implementation handles abrupt network switches (for example, a phone moving from Wi-Fi to mobile data) and resynchronizes state afterward. These high-performance mobile integrations highlight the need for robust client-side retry policies. They also show the value of MessagePack, a compact binary hub protocol, over JSON for reducing payload overhead.
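The client-side retry policies mentioned above can be sketched against the JavaScript/TypeScript client. The `@microsoft/signalr` package's `withAutomaticReconnect(...)` accepts any object with a `nextRetryDelayInMilliseconds(retryContext)` method, and returning `null` stops reconnection attempts.

The sketch below is a minimal example under stated assumptions:

- It declares local copies of those types so it runs without the package installed.
- The class name `ExponentialBackoffRetryPolicy` is hypothetical.
- The default delays and the five-minute budget are illustrative assumptions, not recommended values.

```typescript
// Local stand-ins for the RetryContext / IRetryPolicy shapes exported by @microsoft/signalr,
// declared here only so this sketch compiles and runs without the package installed.
interface RetryContext {
  readonly previousRetryCount: number;
  readonly elapsedMilliseconds: number;
  readonly retryReason: Error;
}

interface IRetryPolicy {
  nextRetryDelayInMilliseconds(retryContext: RetryContext): number | null;
}

// Hypothetical policy: exponential backoff with full jitter, a per-attempt cap,
// and an overall time budget after which the client gives up.
class ExponentialBackoffRetryPolicy implements IRetryPolicy {
  private readonly baseDelayMs: number;
  private readonly maxDelayMs: number;
  private readonly maxElapsedMs: number;
  private readonly random: () => number;

  constructor(
    baseDelayMs = 500,
    maxDelayMs = 30_000,
    maxElapsedMs = 5 * 60_000,
    random: () => number = Math.random, // injectable so the policy can be tested deterministically
  ) {
    this.baseDelayMs = baseDelayMs;
    this.maxDelayMs = maxDelayMs;
    this.maxElapsedMs = maxElapsedMs;
    this.random = random;
  }

  nextRetryDelayInMilliseconds(ctx: RetryContext): number | null {
    // null tells the client to stop reconnecting and move to the Disconnected state.
    if (ctx.elapsedMilliseconds >= this.maxElapsedMs) {
      return null;
    }
    // Upper bound doubles per attempt (500, 1000, 2000, ...) until it reaches maxDelayMs.
    const ceiling = Math.min(this.maxDelayMs, this.baseDelayMs * 2 ** ctx.previousRetryCount);
    // Full jitter: pick a random delay in [0, ceiling) so clients dropped together
    // (for example after a network switch or a server restart) do not reconnect in lockstep.
    return Math.floor(this.random() * ceiling);
  }
}

// Usage with the real client (requires @microsoft/signalr; "/hubs/live" is a hypothetical hub path):
// const connection = new HubConnectionBuilder()
//   .withUrl("/hubs/live")
//   .withAutomaticReconnect(new ExponentialBackoffRetryPolicy())
//   .build();
```

Called with no arguments, `withAutomaticReconnect()` waits 0, 2, 10, and 30 seconds and then stops. A custom policy like this one keeps trying longer while spreading the reconnect load.

Automatic reconnection restores the transport, not application state. The `onreconnected` callback is the natural place to re-join groups and re-fetch a state snapshot.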

Scaling Beyond a Single Server: The Backplane Architecture

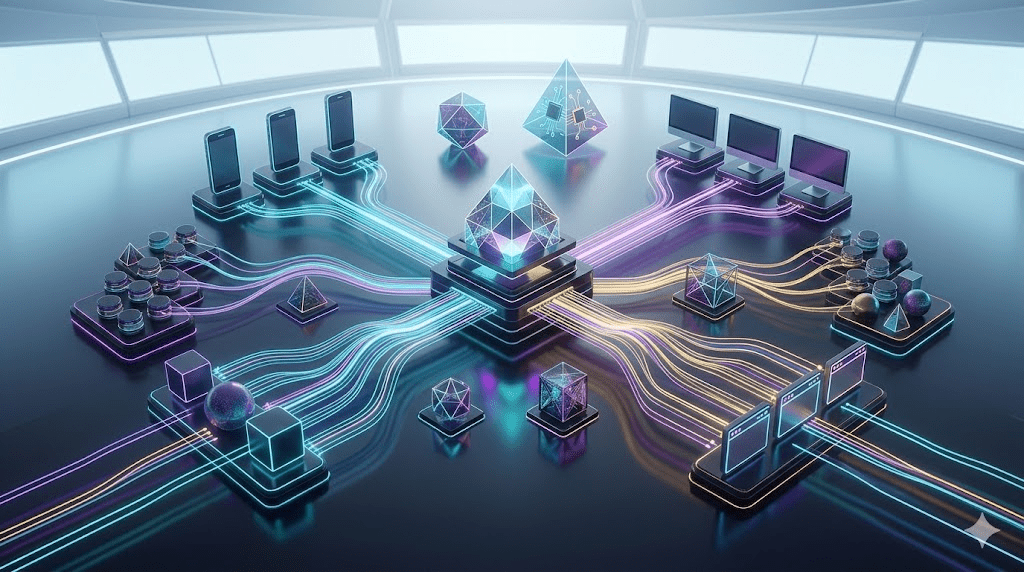

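One of the most frequent hurdles in any .NET tutorial involving SignalR is scale-out. Once your application grows beyond a single instance, a client connected to Server A cannot receive a message broadcast by Server B without a centralized distribution layer, known as a backplane. A related but separate concern is the “sticky session” requirement. Unless clients connect using WebSockets only with negotiation skipped, all HTTP requests for a given connection must reach the same server, so the load balancer also needs session affinity.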
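The failure mode described above can be modeled in a few lines. The sketch below is a hypothetical in-memory simulation; `InMemoryBackplane` and `AppServer` are illustrative classes, not SignalR or Redis APIs.

- Each server only knows about its own connections.
- A broadcast therefore stays local unless it is published through a shared bus that every server subscribes to.
- In a real deployment, a Redis backplane plays the role of that bus.

```typescript
// Hypothetical in-memory model of scale-out; not SignalR or Redis code.
type Handler = (message: string) => void;

// Stand-in for a shared pub/sub channel such as Redis.
class InMemoryBackplane {
  private readonly subscribers: Handler[] = [];

  subscribe(handler: Handler): void {
    this.subscribers.push(handler);
  }

  publish(message: string): void {
    for (const handler of this.subscribers) handler(message);
  }
}

class AppServer {
  // Only the connections attached to this server instance: connectionId -> received messages.
  readonly connections = new Map<string, string[]>();
  private readonly backplane: InMemoryBackplane | null;

  constructor(backplane: InMemoryBackplane | null) {
    this.backplane = backplane;
    // Every server subscribes, so a message published by any one of them reaches all of them.
    backplane?.subscribe((message) => this.deliverLocally(message));
  }

  connect(connectionId: string): void {
    this.connections.set(connectionId, []);
  }

  broadcast(message: string): void {
    if (this.backplane) {
      this.backplane.publish(message); // fan out through the shared bus
    } else {
      this.deliverLocally(message); // reaches this server's clients only
    }
  }

  private deliverLocally(message: string): void {
    for (const inbox of this.connections.values()) inbox.push(message);
  }
}

// Without a backplane, alice (on server A) never sees a broadcast made on server B.
const isolatedA = new AppServer(null);
const isolatedB = new AppServer(null);
isolatedA.connect("alice");
isolatedB.connect("bob");
isolatedB.broadcast("odds updated");

// With a shared backplane, the same broadcast reaches both clients.
const bus = new InMemoryBackplane();
const scaledA = new AppServer(bus);
const scaledB = new AppServer(bus);
scaledA.connect("alice");
scaledB.connect("bob");
scaledB.broadcast("odds updated");
```

The publishing server also receives its own message back from the bus. For that reason, `broadcast` does not deliver locally a second time when a backplane is present.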

Essential Strategies for SignalR Scaling:

- Redis Backplane: Redis Pub/Sub is a widely used option for synchronizing messages across multiple web servers. In ASP.NET Core it is enabled with `AddSignalR().AddStackExchangeRedis(...)`. It ensures that a broadcast such as `Clients.All.SendAsync(...)` reaches every connected client, regardless of which server holds the connection.

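- Azure SignalR Service: For serverless or highly elastic workloads, offloading connection management to a managed service moves the per-connection CPU and memory cost off your application servers. The service also handles message fan-out across instances, so neither a separate backplane nor sticky sessions are required.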

- Transport Optimization: WebSockets are the preferred transport. Keep Server-Sent Events (SSE) and Long Polling enabled as fallbacks so that clients behind proxies or networks that block WebSockets can still connect. SignalR negotiates the transport automatically in that order: WebSockets, then SSE, then Long Polling.
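The fallback order can be sketched as a loop over transports in preference order: SignalR clients try WebSockets first, then Server-Sent Events, then Long Polling. This is a simplified, hypothetical model of client negotiation.

- The real client also processes the negotiate response, handles transport-specific errors, and honors configuration.
- `connectWithFallback` and `tryConnect` are illustrative names, not library APIs.

```typescript
type Transport = "WebSockets" | "ServerSentEvents" | "LongPolling";

// Most capable first: full-duplex WebSockets, then server-to-client SSE, then Long Polling.
const PREFERENCE: readonly Transport[] = ["WebSockets", "ServerSentEvents", "LongPolling"];

// Hypothetical helper: tries each transport the server offers, in preference order,
// and returns the first one that connects. tryConnect stands in for the real handshake.
async function connectWithFallback(
  serverTransports: ReadonlySet<Transport>,
  tryConnect: (transport: Transport) => Promise<boolean>,
): Promise<Transport> {
  const failures: string[] = [];
  for (const transport of PREFERENCE) {
    if (!serverTransports.has(transport)) {
      failures.push(`${transport}: not offered by server`);
      continue;
    }
    if (await tryConnect(transport)) {
      return transport;
    }
    failures.push(`${transport}: connection attempt failed`);
  }
  // Reporting every reason helps diagnose proxies or servers that disable a transport.
  throw new Error(`No usable transport (${failures.join("; ")})`);
}

// Example: a proxy that blocks WebSocket upgrades (simulated).
const allTransports = new Set<Transport>(["WebSockets", "ServerSentEvents", "LongPolling"]);
const behindProxy = async (transport: Transport): Promise<boolean> => transport !== "WebSockets";
```

In the real JavaScript client, the allowed transports are set through the `transport` option of `withUrl` (an `HttpTransportType` flag value). Restricting it to WebSockets is what allows `skipNegotiation: true`, which in turn removes the need for sticky sessions.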

This level of architectural rigor is what complex ecosystems such as the 1xbet online casino rely on, where thousands of simultaneous game states must be synchronized across a global user base with minimal lag. The lesson for developers is clear: design for horizontal scaling from day one, rather than trying to “bolt it on” after hitting performance bottlenecks.

Optimization Techniques for High-Concurrency Hubs

Beyond infrastructure, the internal logic of your SignalR hubs determines how responsive your application ultimately is. Experience from high-traffic systems favors a “lean hub” approach: keep hub methods short and avoid blocking calls that lead to thread-pool starvation.

Top Performance Optimization Steps:

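- Asynchronous Everything: Make hub methods return `Task` and use `async`/`await` throughout the services they call. Blocking on asynchronous work (for example with `.Result` or `.Wait()`) ties up thread-pool threads and can exhaust the pool during high-volume spikes.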

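- Group Management: Never iterate through all connections to find a specific set of users. Instead, add connections to SignalR groups with `Groups.AddToGroupAsync` and multicast with `Clients.Group(...)`. Group membership is not preserved across reconnects, so clients must re-join their groups after reconnecting.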

- Throttling and Buffering: Instead of pushing every individual data change, buffer updates and send them in “ticks” (e.g., every 100-500 ms). Keeping only the latest value for each item reduces the number of small messages traversing the network.
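Group multicasting and tick-based buffering combine naturally:

- Updates are coalesced per group, so only the latest value for each key survives until the next tick.
- Each tick then sends one message to each group member.

The sketch below is a hypothetical, framework-free model. `TickBroadcaster`, the `send` callback, and the group and key names are all illustrative. In an ASP.NET Core hub, the per-member loop would collapse into a single `Clients.Group(name).SendAsync(...)` call per group.

```typescript
// Hypothetical sketch of group membership plus tick-based coalescing; not the SignalR server API.
type Payload = Record<string, number>;
type Send = (connectionId: string, payload: Payload) => void;

class TickBroadcaster {
  // group name -> connection IDs, so a send never scans every connection
  private readonly groups = new Map<string, Set<string>>();
  // group name -> (key -> latest value); a newer value for the same key overwrites the older one
  private readonly pending = new Map<string, Map<string, number>>();
  private readonly send: Send;

  constructor(send: Send) {
    this.send = send;
  }

  addToGroup(connectionId: string, group: string): void {
    let members = this.groups.get(group);
    if (!members) {
      members = new Set<string>();
      this.groups.set(group, members);
    }
    members.add(connectionId);
  }

  removeFromGroup(connectionId: string, group: string): void {
    this.groups.get(group)?.delete(connectionId);
  }

  // Called on every data change; only buffers, never sends.
  enqueue(group: string, key: string, value: number): void {
    let updates = this.pending.get(group);
    if (!updates) {
      updates = new Map<string, number>();
      this.pending.set(group, updates);
    }
    updates.set(key, value);
  }

  // Called once per tick (e.g. from a 100-500 ms timer); returns the number of messages sent.
  flush(): number {
    let sent = 0;
    for (const [group, updates] of this.pending) {
      const payload: Payload = Object.fromEntries(updates);
      for (const connectionId of this.groups.get(group) ?? []) {
        this.send(connectionId, payload);
        sent++;
      }
    }
    this.pending.clear();
    return sent;
  }
}

// Three changes to one group within a tick become one message per member.
const outbox: Array<[string, Payload]> = [];
const broadcaster = new TickBroadcaster((id, payload) => { outbox.push([id, payload]); });
broadcaster.addToGroup("conn-1", "match-42");
broadcaster.addToGroup("conn-2", "match-42");
broadcaster.addToGroup("conn-3", "match-7");
broadcaster.enqueue("match-42", "homeOdds", 1.9);
broadcaster.enqueue("match-42", "homeOdds", 1.85); // overwrites 1.9 before it is ever sent
broadcaster.enqueue("match-42", "awayOdds", 2.1);
const sentThisTick = broadcaster.flush(); // 2: conn-1 and conn-2; conn-3 is in another group
```

The timer adds up to one tick of latency to every update. The interval is therefore a trade-off between message volume and freshness.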

Conclusion: The Real-Time Standard in 2026

Real-time features are no longer a niche requirement in .NET applications; users now expect them by default. By applying the lessons learned from high-traffic platforms (protocol efficiency, backplane stability, and asynchronous hub design), you can build systems that are both resilient and exceptionally fast. The goal of any modern developer should be to transform the static web into a living, breathing interface where data flows as naturally as conversation.